According to McKinsey, over 60% of consumers now use AI search to research, compare, and decide what to buy, and that share keeps growing. That means if your product isn’t being recommended by AI tools, a growing chunk of your buyers will never know it exists.

These trends led us to spend months reverse-engineering how ChatGPT, Claude, Gemini, Perplexity, Grok, and Reddit Answers decide what to surface. We studied leaked system prompts, patent filings, backend configurations, and dev console logs, and ran our own research experiments in these tools.

This guide breaks down the basics of what we found. Spoiler: Every LLM works a little differently, but the same patterns keep showing up in the content that gets cited.

While we can’t guarantee you a citation in a given AI answer, we can walk you through those patterns and what you can do about them to build a GEO foundation that will put you ahead of the competition.

TL;DR

- Each LLM searches the web differently — ChatGPT uses Bing, Claude uses Brave, Gemini uses Google, Grok pulls from X — but the same patterns show up in content that gets cited across all of them.

- The core strategies: write with hard specifics that match how people prompt LLMs, lead with the answer, structure content so it chunks cleanly, build topical depth across multiple pages, keep everything fresh, make your site accessible to AI crawlers, build a consistent brand entity, and earn credibility through third-party mentions.

- None of this guarantees a citation, but it gives you the strongest foundation to work from.

The General Principles of How LLMs Work

Large Language Models (LLMs) are the AI systems behind tools like ChatGPT, Claude, Gemini, Perplexity, Grok, and Reddit Answers.

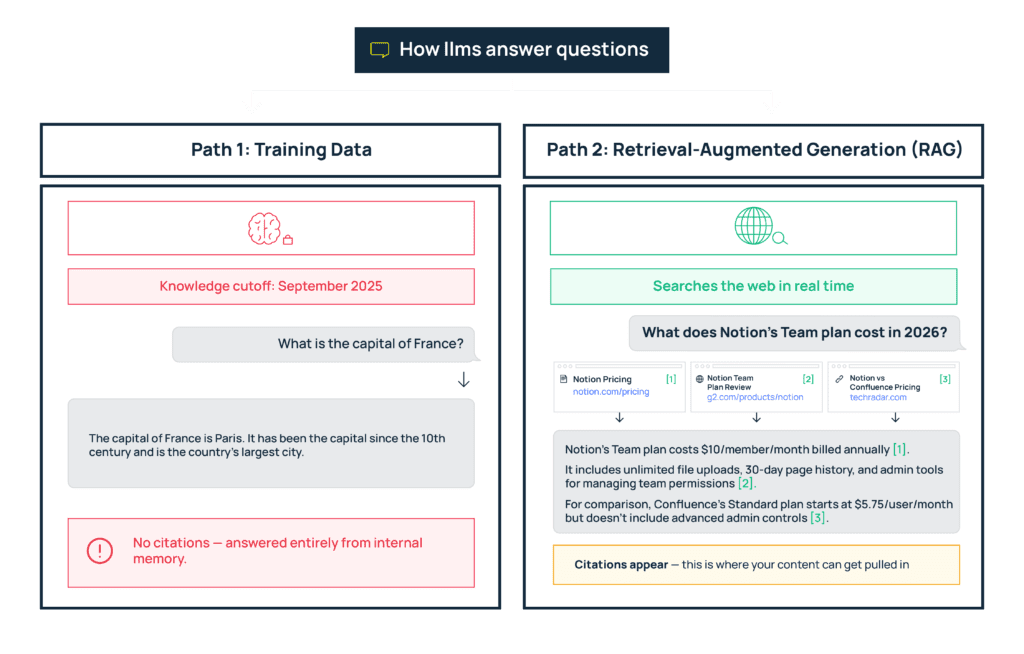

When you ask one of these tools a question, it builds an answer in two general ways. One, it draws on the data it has already processed (training data). This lets it answer stable questions, such as “What is the capital of France?”, quickly and consistently.

Two, it searches the web. This helps it answer questions that fall outside its training data, such as “Who won the 2026 Oscar for best actor?”, or questions that need multiple layers of up-to-date context, such as “What is the best SEO plugin for WordPress in 2026?”

LLMs typically search the web through retrieval-augmented generation (RAG). It’s the system that pulls relevant content from external sources like web pages and databases and passes it to the LLM, so the model can base its response on current, factual information.

You can’t influence training data, which is already in LLM systems. But you can aim to influence LLM citations for questions where it has to search the web for new information.

While each LLM searches slightly differently (ChatGPT and Perplexity pull from Bing, for example, while Gemini uses Google’s index), there are steps you can take to help your content get picked up by any LLM. That’s because, like search engines, these systems all respond to similar content signals even if they don’t have 100% overlap in ranking or citation factors.

Strategies That Improve Your Chances of Ranking in LLMs

After studying 6 major LLMs, we found the same patterns showing up across brands that get cited. The strategies below are based on those patterns.

1. Write With Specifics That Match How People Prompt LLMs

Users give LLMs very detailed prompts to work with, averaging 15 words per query. For example, instead of “best CRM for small teams,” they now write “best CRM for 5-10 person HR teams under $50 per member with Slack and BambooHR integrations.”

That’s a lot of specifics the LLM needs to match. To give LLMs confidence that your content answers these types of queries, mention these types of details explicitly.

For example, if your page says “built for small teams, with powerful integrations and affordable pricing,” you might technically answer a user’s query. But an LLM is less likely to cite you because you don’t list details of team size, integrations, or cost. Vague language gives the LLM no reason to think your page satisfies the user’s intent.

What to do:

- Spell it out. Instead of “affordable pricing and powerful integrations,” write something like “Our plans start at $29/member/month for teams of up to 15” and “Our solution connects directly with 12 tools: Slack, BambooHR, Greenhouse…”

2. Lead With the Answer

44.2% of all citations in LLMs come from the first 30% of a page, so getting to the point matters a lot.

For example, say an LLM is trying to answer the query “How to Set Up SSO for Remote Teams.” If you explain what SSO is and why security matters before getting to the actual setup steps, and your competitor answers in the first two paragraphs, your competitor is more likely to be cited.

What to do:

- Start with a clear, direct answer to the question the page is about.

- Use a TL;DR that gives someone the takeaway of your page in seconds.

- Fill out the second half of your article with the details and context someone would want if they were researching your topic in more depth.

3. Structure Your Content So LLMs Can Chunk It Cleanly

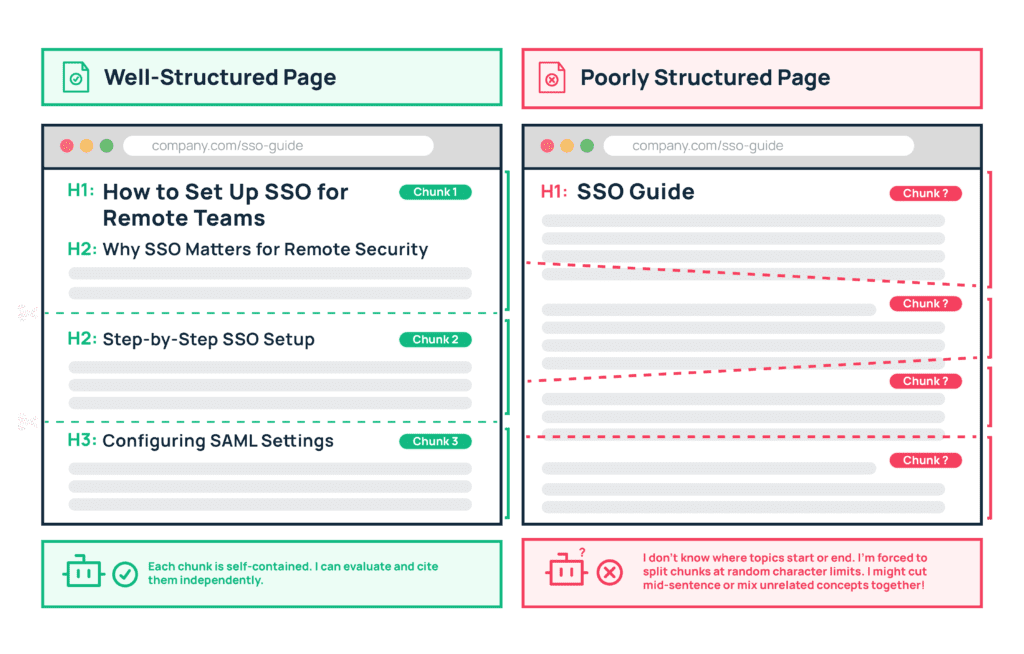

LLMs break your content down into chunks and evaluate each one separately. Google, for example, creates chunks of up to 200 words, with a maximum of 30 chunks per page. So every section of your content needs to work as a standalone unit.

What to do:

- Write in short paragraphs that are self-contained, only covering one major point per section.

- LLMs decide where to split your content into chunks based on your heading structure — your H1s, H2s, and H3s. So keep those heading levels in logical order (H1 → H2 → H3) without skipping any.

- Use tables, FAQs, and bulleted lists to create natural break points that make it easier for LLMs to extract your main points.
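To see why self-contained sections matter, here is a minimal sketch of heading-based chunking. It is an illustration under our own assumptions, not any vendor's actual pipeline: the function name `chunk_by_headings` is hypothetical, it assumes markdown-style `#` headings, and it simply reuses the 200-word and 30-chunk limits mentioned above.

```python
import re

MAX_WORDS = 200   # chunk size cited above for Google (reused here as an assumption)
MAX_CHUNKS = 30   # per-page cap cited above for Google (reused here as an assumption)

def chunk_by_headings(markdown_text, max_words=MAX_WORDS, max_chunks=MAX_CHUNKS):
    """Split text at H1-H3 headings, then cap each chunk at max_words words."""
    chunks = []
    heading = "(no heading)"
    words = []

    def flush():
        # Emit the words collected under the current heading in max_words slices.
        for i in range(0, len(words), max_words):
            chunks.append((heading, " ".join(words[i:i + max_words])))
        words.clear()

    for line in markdown_text.splitlines():
        match = re.match(r"^(#{1,3})\s+(.*)", line)
        if match:
            flush()                      # close the previous section first
            heading = match.group(2).strip()
        else:
            words.extend(line.split())
    flush()
    return chunks[:max_chunks]

page = "# Guide\nIntro text here.\n## Setup\n" + "word " * 250
for title, body in chunk_by_headings(page):
    print(title, len(body.split()))
```

Notice that a long section gets cut mid-thought once it passes the word limit, which is the practical reason to keep each section short enough to stand alone.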

4. Build Topical Depth

When someone asks an LLM a question, it rewrites the prompt into multiple variations (a process called query fan-out) and looks for pages that show up consistently across them.

For example, if a user asks “how to automate employee onboarding,” the LLM might also search for “best onboarding automation tools,” “onboarding workflow templates,” “how to set up automated welcome emails for new hires,” and more.

Sites that show up across several of these related searches are more likely to be cited in the final answer than sites that only appear for one.

What to do:

- Build topic clusters. Have a hub page for the main concept, supported by pages covering comparisons, integrations, use cases, pricing, workflows, and edge cases. Each page gives the LLM another entry point to find you across different variations of the same question.
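One way to check your own coverage is to count how many fan-out variations your domain shows up for. The sketch below assumes you have already collected the result URLs for each variation yourself; the function name `domains_by_coverage` and the `.example` domains are hypothetical, and the counting rule is our simplification rather than how any LLM actually scores sites.

```python
from collections import Counter
from urllib.parse import urlparse

def domains_by_coverage(results_per_query):
    """Count how many fan-out queries each domain appears in."""
    coverage = Counter()
    for urls in results_per_query.values():
        # A domain counts once per query, however many of its pages appear.
        coverage.update({urlparse(url).netloc for url in urls})
    return coverage.most_common()

# Hypothetical search results for three variations of one question.
results = {
    "how to automate employee onboarding": [
        "https://hr-hub.example/onboarding-automation",
        "https://one-post.example/guide",
    ],
    "best onboarding automation tools": [
        "https://hr-hub.example/tools-comparison",
        "https://reviews.example/onboarding-tools",
    ],
    "onboarding workflow templates": [
        "https://hr-hub.example/templates",
    ],
}

for domain, hits in domains_by_coverage(results):
    print(f"{domain}: appears in {hits} of {len(results)} variations")
```

A domain with a topic cluster appears in every variation here, while the single-page sites appear once each.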

5. Keep Content Visibly Fresh

Every LLM we studied uses some form of freshness check when choosing where to pull information from.

ChatGPT has an always-on freshness scoring filter. Claude adds year markers to its web searches even when the user doesn’t mention a year in their prompt. Perplexity gradually lowers the weight of older pages over time.

They all want to give users the most accurate, up-to-date information possible, and outdated content is one of the easiest reasons to drop a page.

What to do:

- Refresh your high-value pages quarterly. Update pricing, examples, screenshots, and timestamps.

- Make the update visible with a clear “last updated” date, synced sitemaps, and real content changes.
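Keeping the sitemap in sync can be automated. The following is a rough sketch that writes a `lastmod` date for each page using the standard sitemap XML format; the `build_sitemap` helper and the example URLs are our own placeholders, and you would feed it the real dates on which each page's content changed.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod should reflect a real content change, not just a rebuild.
        ET.SubElement(entry, "lastmod").text = modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")

# Placeholder pages and dates for illustration only.
print(build_sitemap([
    ("https://example.com/pricing", date(2026, 1, 15)),
    ("https://example.com/integrations", date(2026, 2, 3)),
]))
```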

6. Make Your Site Technically Accessible to AI Systems

If your site isn’t accessible to an LLM’s crawler, it can’t read your pages or index your information. That means you will never show up in an LLM’s answer, no matter how good your content is.

To maximize your GEO visibility, make sure your site can be found by the different search backends LLMs use to find content. For example, Claude pulls from Brave, while ChatGPT and Perplexity use Bing.

What to do:

- Check your robots.txt to make sure you’re not accidentally blocking AI crawlers from accessing important pages.

- Consider adding an llms.txt file to guide them toward your most valuable content.

- Serve key information in plain HTML — if it’s buried behind JavaScript or locked in PDFs, crawlers can’t read it.

- Maintain fast load times and keep sitemaps updated.
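The robots.txt check is easy to script with Python's standard library. This is a minimal sketch: the `blocked_crawlers` helper is our own, the sample robots.txt is made up, and the user-agent tokens listed are ones the vendors have published to the best of our knowledge, so verify them against each vendor's current documentation.

```python
from urllib.robotparser import RobotFileParser

# Crawler tokens to test (assumed list; confirm against vendor docs).
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended", "bingbot"]

def blocked_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the crawlers that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]

# Made-up robots.txt that blocks one AI crawler site-wide.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_crawlers(sample, "https://example.com/pricing"))
```

To test a live site, you could replace the sample string with the contents of your own robots.txt file.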

Learn more about how LLM indexing works in our detailed guide here: How LLMs Index Your Site

7. Get Third-Party Mentions to Build Credibility

LLMs check what other sources across the web say about your product to verify your credibility before recommending it to users.

Perplexity, for example, has a list of domains it considers trustworthy for specific industries. For SaaS, that includes places like GitHub, Stack Overflow, and Crunchbase. So if your product is discussed in a Stack Overflow thread, referenced in a GitHub repo, or listed on Crunchbase, Perplexity treats your brand as more credible and is more likely to surface you in its answers.

What to do:

- Work on getting your brand featured in category roundups, industry publications, and review platforms.

- Contribute to communities where your expertise is relevant.

- Publish original research that others want to cite, to gain backlinks (and therefore credibility) on trusted domains.

8. Use Structured Data and Schema Markup

Schema markup is code in your HTML that labels your content for machines — tagging sections as FAQs, how-to guides, product pages, and so on. It tells LLMs exactly what type of content they’re looking at, so they can retrieve the right pages for the right queries faster.

If it’s missing, LLMs have to figure out what the content is from the text alone, which increases the chance of your page getting misidentified and dropped during the quality filtering stage.

What to do:

- Implement Organization, Product, FAQ, HowTo, and SoftwareApplication schema across your site where relevant. Make sure it’s validated and accurate — broken or outdated schema does more harm than having none at all.
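As an illustration, here is a small sketch that generates FAQ markup as JSON-LD, the format schema.org markup is commonly embedded in. The `faq_jsonld` helper and the sample question are hypothetical; the `FAQPage`, `Question`, and `Answer` types come from schema.org. Always run the output through a schema validator before shipping it.

```python
import json

def faq_jsonld(questions_and_answers):
    """Render (question, answer) pairs as a schema.org FAQPage script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions_and_answers
        ],
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"

# Hypothetical FAQ content for illustration.
print(faq_jsonld([
    ("How much does the plan cost?", "Plans start at $29 per member per month."),
]))
```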

For a step-by-step walkthrough, read: What Schema Markup Is and How Anyone Can Implement It on SaaS Websites

9. Build a Consistent Brand Entity Across the Web

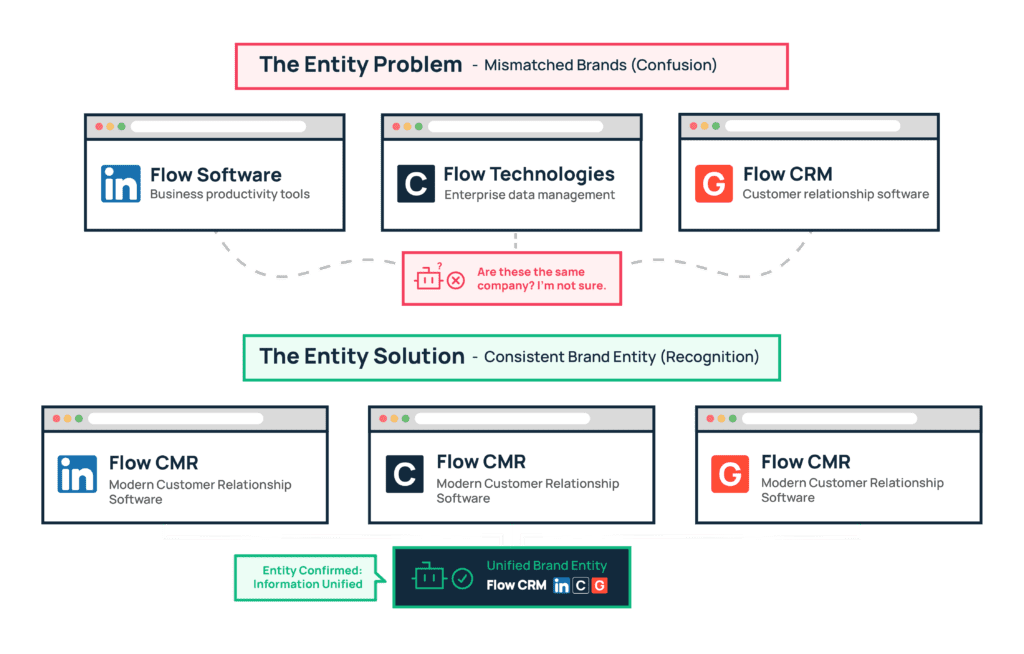

If your brand shows up as Flow CRM on LinkedIn, Flow Software on Crunchbase, and Flow Technologies on G2, an LLM may not be able to tell that these are all the same company. To get LLMs to recognize your brand and product, and therefore confidently recommend you, work towards becoming an entity.

An entity, in LLM terms, is how the system recognizes your brand as a distinct, known thing – separate from other companies with similar names or descriptions.

What to do:

- Standardize your brand name, tagline, and category descriptions across every important public profile — your website, LinkedIn, Crunchbase, G2, Product Hunt, and anywhere else your brand appears.

- On your own site, use back-end signals to help LLMs recognize your entities. For example, add Organization and Product schema: structured code that explicitly tells machines “this is a company” or “this is a product,” along with key details like name, description, and category. Include sameAs links in that code (references that point to your official profiles elsewhere on the web) so LLMs can connect the dots.
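Here is a rough sketch of what Organization markup with sameAs links can look like, generated as JSON-LD. The `organization_jsonld` helper is our own, and the company name and every URL below are placeholders for the Flow CRM example, not real profiles; `Organization` and `sameAs` are schema.org terms.

```python
import json

def organization_jsonld(name, url, description, profiles):
    """Render schema.org Organization markup with sameAs profile links."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Organization",
            "name": name,                 # use the exact same name everywhere
            "url": url,
            "description": description,
            "sameAs": list(profiles),     # official profiles on other sites
        },
        indent=2,
    )

# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Flow CRM",
    url="https://flowcrm.example",
    description="CRM for small HR teams.",
    profiles=[
        "https://linkedin.example/company/flow-crm",
        "https://crunchbase.example/organization/flow-crm",
        "https://g2.example/products/flow-crm",
    ],
)
print(markup)
```

The point of the single `name` field is that the same string should match what each linked profile displays.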

For a deeper look at how LLMs process entities and how to build yours, read: What Is an Entity in LLMs and How Your Product or Company Can Become One.

Major Differences in How Each LLM Ranks Your Content

A lot of how LLMs work follows the same general principles we’ve covered above. But each one has differences worth knowing about, because they affect what you prioritize and how you show up in a given platform. Here’s a quick look at how each one works:

How ChatGPT Ranks Your Content

Searches through: Bing

Key difference:

- ChatGPT rewrites your prompt into multiple variations and merges the results. A site that ranks #5 across ten variations is more likely to be cited than one that ranks #1 for a single query.

- It also runs a freshness filter on every query, so pages that haven’t been updated recently lose ground to ones that have.

- For decision-level queries like “best CRM for startups,” it tends to cite third-party sources like review sites and comparison articles over the product’s own website.

Prioritizes: Freshness, topical breadth, third-party mentions.
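To build intuition for why breadth can beat a single top ranking, here is a sketch using reciprocal rank fusion (RRF), a common technique for merging ranked lists. We are not claiming ChatGPT uses RRF; it is a stand-in to show the arithmetic, and the constant `k=60`, the function name `rrf_scores`, and all domains are assumptions for illustration.

```python
def rrf_scores(rankings, k=60):
    """Merge ranked URL lists; each appearance adds 1 / (k + position)."""
    scores = {}
    for ranked in rankings:
        for position, url in enumerate(ranked, start=1):
            scores[url] = scores.get(url, 0.0) + 1.0 / (k + position)
    return scores

# Ten hypothetical query variations: "steady.example" is always #5,
# "spike.example" is #1 for the first variation only.
rankings = []
for i in range(10):
    fillers = [f"filler-{i}-{n}.example" for n in range(4)]
    if i == 0:
        fillers[0] = "spike.example"
    rankings.append(fillers + ["steady.example"])

scores = rrf_scores(rankings)
print(round(scores["steady.example"], 4), round(scores["spike.example"], 4))
```

Under this scheme the site at #5 in all ten lists accumulates 10/65, while the site at #1 in one list gets only 1/61, so the consistent site comes out well ahead.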

Read our full breakdown: How ChatGPT’s Ranking Algorithm Works

Read our strategy guide: How to Get Mentioned in ChatGPT

How Claude Ranks Your Content

Searches through: Brave – a smaller search engine that doesn’t have the high-authority domain bias you see in Google or Bing.

Key difference:

- When Claude searches, it rewrites the user’s query into increasingly specific sub-searches, narrowing down until it finds pages that match the exact detail it needs.

- It only cites a source when the information is too specific to paraphrase without risking accuracy.

- Smaller and newer brands have a real opportunity here, because Brave’s index gives them visibility they wouldn’t get through Google.

Prioritizes: Specific, hard-to-paraphrase details — exact numbers, steps, data points.

Read our full breakdown: How Claude Ranks Your Content

Read our strategy guide: How to Get Mentioned in Claude

How Gemini and Google AI Mode Rank Your Content

Searches through: Google’s own index

Key difference:

- Before Google’s AI even starts looking for content, it builds a profile of the person asking based on their past searches, clicks, location, and device. That profile shapes which results it considers relevant, meaning the same question asked by two different people can surface completely different sources.

- Based on that profile, it pulls passages from across the web to build a mini-index (called a custom corpus) just for that question. Then it compares those passages head-to-head to pick the ones that best match the user’s intent, and assembles them into a final answer.

Prioritizes: Content that closely matches a specific audience’s problems, workflows, and language.

Read our full breakdown: How Gemini and Google AI Mode Rank Your Content

Read our strategy guide: How to Get Mentioned in Gemini and Google AI Mode

How Perplexity Ranks Your Content

Searches through: Bing’s API, merged with its own cache of previously indexed pages

Key difference:

- Perplexity maintains a list of domains it considers inherently trustworthy for specific industries – for SaaS, that includes GitHub, Stack Overflow, and Crunchbase. Pages connected to those domains get a trust boost.

- It also rewards sites that have multiple connected pages around the same theme.

- There’s also a YouTube signal – when a query that’s trending on Perplexity has a YouTube video with an exact title match, both the video and related web content get a visibility boost.

- Unlike other LLMs, it actively monitors engagement after citing you – it runs rolling 7-day checks, and if users keep seeing your page in answers but never click through, it stops citing you.

Prioritizes: Trusted domain connections, topical depth across linked pages, ongoing engagement

Read our full breakdown: How Perplexity’s Ranking Algorithm Works

Read our strategy guide: How to Get Mentioned in Perplexity

How Grok Ranks Your Content

Searches through: Training data + live signals from X (formerly Twitter)

Key difference:

- Grok doesn’t crawl the web or maintain a search index. It pulls from what’s happening on X in real time. When a topic trends or a post gains traction, Grok reflects it almost instantly.

- Credibility here comes from engagement – replies, threads, and discussions that show real people finding your content useful.

Prioritizes: Timely, conversational content backed by X engagement

Read our strategy guide: How to Get Mentioned in Grok

How Reddit Answers Ranks Your Content

Searches through: Existing public Reddit threads and comments

Key difference:

- Reddit Answers only pulls from public, well-moderated subreddits. It cites posts and comments equally, so a detailed reply carries as much weight as a standalone post.

- Credibility comes from Reddit karma (a reputation score based on the upvotes and downvotes your contributions receive), post history, and following each subreddit’s rules.

- Contributions made in Reddit threads feed into almost every LLM we’ve discussed above (except Grok). They all pull from Reddit threads when building their responses, meaning any well-written post or comment on Reddit can end up cited across multiple AI platforms at once.

Prioritizes: Genuine, detailed contributions from credible community members

Read our strategy guide: How to Show Up in Reddit Answers

Final Thoughts On How To Rank In LLMs

The single thread running through everything we’ve learned is that LLMs need clarity to be confident about mentioning and citing your product in their answers.

Clarity in what your content says – your pages and posts are specific, structured, and self-explanatory.

Clarity in who you are – you are a consistent brand entity backed by third-party proof.

Clarity in how fresh your information is.

On top of that, none of it matters if LLMs can’t actually find and read your pages in the first place — so making your site technically accessible to their crawlers is the foundation everything else sits on.

Get these things right, and you’re already ahead of competitors who are still writing for traditional search alone and not thinking about the conversations happening inside LLMs.

To find out where your brand currently stands across LLMs and what it would take to start showing up, talk to us – we’ll audit your visibility and build you a roadmap.